AirFlights Bronze Layer

Tools: Databricks | SerpAPI | Pandas | PyArrow | PySpark | Delta Lake | datetime

Description:

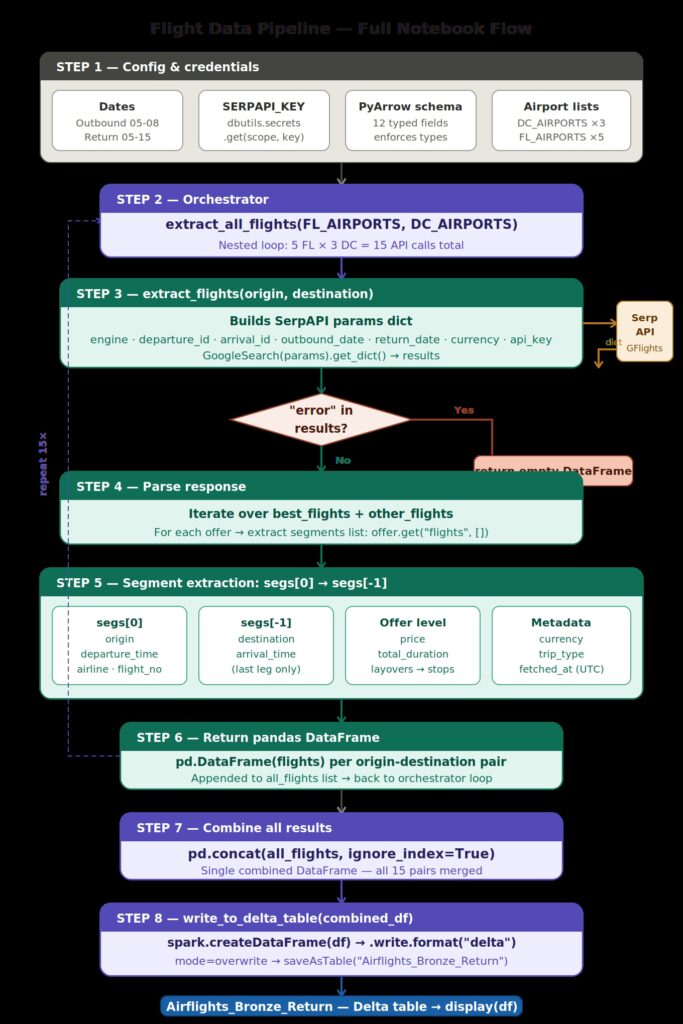

This layer is responsible for extracting and storing raw flight data as-is, with no transformations or business logic applied. It consists of two identical notebooks: the first extracts outbound flights between the defined origin airports (DC metro — IAD, DCA, BWI) and destination airports (Florida — 5 airports), and the second runs the same logic in reverse to capture return flights. Each notebook calls SerpAPI’s Google Flights engine across all airport combinations (15 API calls per run), parses the raw response into a flat pandas DataFrame enforced by a PyArrow schema, and writes the result to a Delta table in Databricks. The raw fetched_at timestamp on every record ensures full traceability back to the moment of ingestion.

Saved as:

- Airflights_Outbound — DC → FL flights

Airflight_Return— FL → DC flights

The configuration and setup

— SerpAPI is a service that scrapes Google search results (including Google Flights) and returns structured data SERPAPI_KEY is retrieved securely from Databricks Secrets rather than hardcoded

PyArrow Schema

This defines the structure of your Delta/Parquet table before any data is written.

Each field specifies a column name and its data, Defining the schema upfront enforces type safety — bad data gets caught at write time rather than causing silent corruption downstream.

Airport List

The three major airports serving the Washington D.C. metro area — Dulles, Reagan National, and BWI. This list is probably looped over later to search flights from each airport, giving you price comparisons across all three.

Code Explanation

This defines three functions that together form the extract and load step of your Bronze ingestion pipeline.

extract_flights(origin, destination)

Fetches flight data for a single airport pair from SerpAPI.

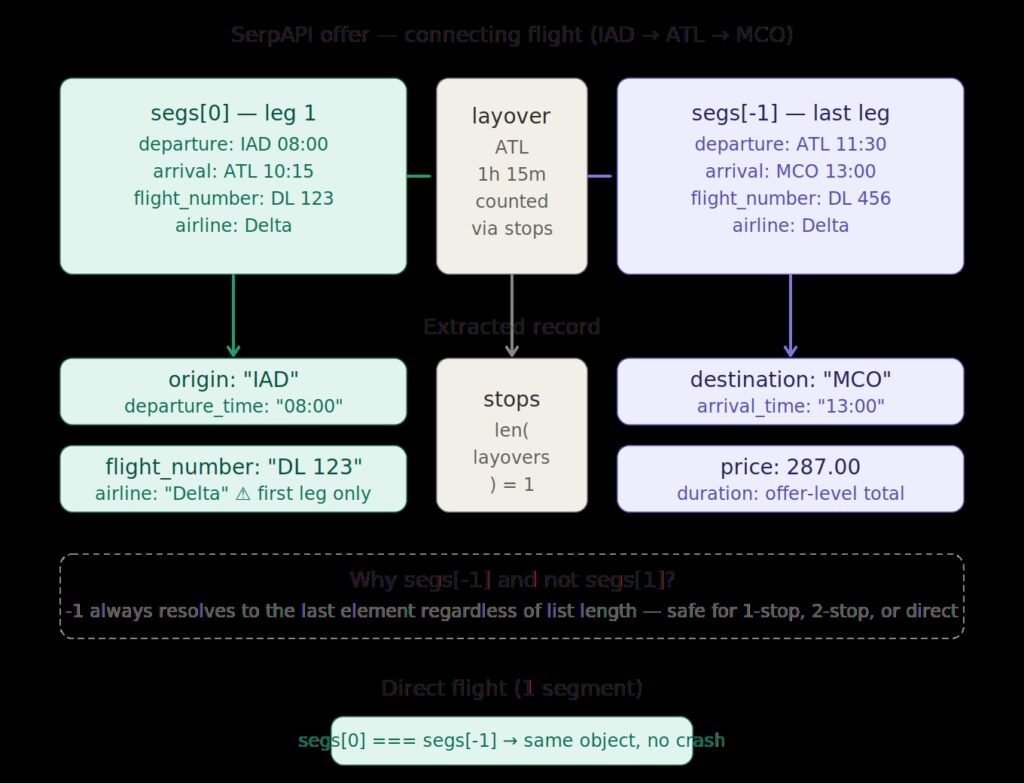

Sends a request to SerpAPI’s Google Flights engine and gets back a dictionary of results. For each flight offer, it grabs segs[0] (first segment) and segs[-1] (last segment) .

Parsing the response:

SerpAPI returns two buckets of flights:

other_flights — remaining available options

best_flights — Google’s top recommended options

write_to_delta_table(df, table_name)

Converts the pandas DataFrame to Spark and writes it as a Delta table.

mode("overwrite") replaces the table on each run — appropriate for Bronze since you’re re-fetching fresh raw data. This would need to change to append or merge if you wanted to track history across runs.

extract_all_flights(origins, destinations)

Orchestrates the full extraction across all airport combinations.

df = extract_flights(origin, destination)

The function accepts origins and destinations as parameters but ignores them — the loop hardcodes FL_AIRPORTS and DC_AIRPORTS directly.

It collects all non-empty DataFrames, concatenates them with pd.concat, then writes once to Airflights_Bronze_Return.

Execution Flow

extract_all_flights(FL_AIRPORTS, DC_AIRPORTS)

└── for each of 15 pairs (5 FL × 3 DC airports):

extract_flights(origin, destination)

└── SerpAPI call → parse best + other flights → pandas df

└── pd.concat all results

└── write_to_delta_table → "Airflights_Bronze_Return"

└── display(df)

One thing worth noting: flight_number and airline are always taken from segs[0] (the first leg). For a multi-segment itinerary operated by different airlines on different legs, those fields only reflect the first carrier — that's a minor data limitation in the current implementation.